-

Notifications

You must be signed in to change notification settings - Fork 3.6k

bug fix in from_datasets method #14082

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Conversation

|

I don't think it's a bug since |

| return dataloader(predict_dataset) | ||

|

|

||

| datamodule = cls() | ||

| datamodule = cls(batch_size=batch_size) |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

We can't assume the datamodule takes a batch_size argument in general. In which library did you find this problem?

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

What do you mean which library? pytorch lightning library.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

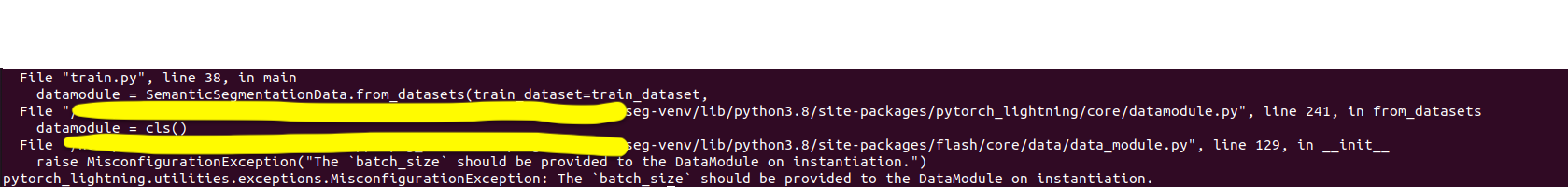

I tried to use SemanticSegmentationData.from_dataset method

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

good catch, in that case we need to add another **kwargs parameter and check if the existing ones are part of it or not.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

From skimming the flash.DataModule source code, it doesn't look like this method is supposed to be compatible with it, as it already manages creating the DataLoaders etc.

Thoughts @krshrimali?

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Flash has an extra parameter **datamodule_kwargs in their special from_* methods. But this is unfortunately not a universal solution, because a parameter like batch_size, which is also used as a parameter by the from_dataset method, can't be passed in "twice" as kwargs. There are some solutions

- use a proper dict datamodule_kwargs as parameter instead of kwargs. But this would be inconsistent with how Flash handles it.

- Have datamodule_kwargs as proper kwargs, but do a signature inspection for the init to know whether you need to pass in parameters like batch_size

- Override the from_datasets method in FlashDatamodule and raise an error that this method is not supported.

- Something else?

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

i'd say 2

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Implemented in #14185

fcfce83 to

b04549d

Compare

|

closing in favor of #14185 |

What does this PR do?

Fixes #<issue_number>

Does your PR introduce any breaking changes? If yes, please list them.

Before submitting

PR review

Anyone in the community is welcome to review the PR.

Before you start reviewing, make sure you have read the review guidelines. In short, see the following bullet-list:

Did you have fun?

Make sure you had fun coding 🙃