Temporarily pin docker image used in GPU CI #13218

Conversation

Just realised that there seems to be another issue 😞

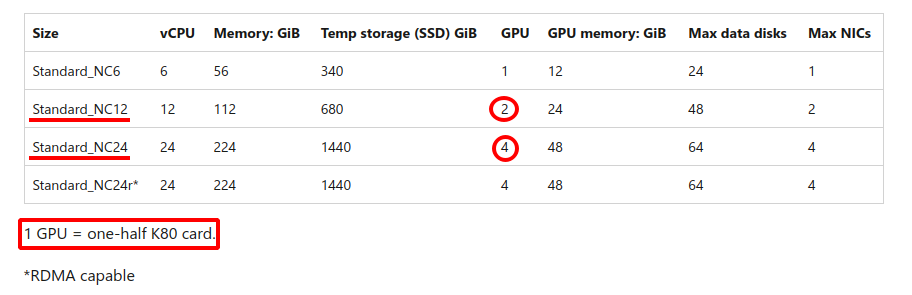

This might be caused by the fact that @Borda recently switched to K80 GPUs. I don't think this is a problem in our codebase.

For TM, we just migrated to LTS with CUDA 10.2 (see Lightning-AI/torchmetrics#1071), but the error there was different.

@Borda Thank you for investigating the above error in #13245. I looked for similar issues on GitHub and Stack Overflow, but all I could find so far is NVIDIA/MinkowskiEngine#235 (K80 and CUDA 10.2), which has no solution...

I think we can close this, as it turned out the failing builds were unrelated to the docker image used and were instead caused by the recent switch of resources. The NC12 used in the last few days has likely been sharing its GPU with other machines, so the switch to NC24 seems to resolve the issue 🐰

@Borda Does it? It looks like switching from NC12 to NC24 didn't help, as we're still seeing the same error in #13245: https://dev.azure.com/PytorchLightning/pytorch-lightning/_build/results?buildId=74406&view=logs&j=8ef866a1-0184-56dd-f082-b2a5a6f81852&t=4006504a-0df6-59ce-b931-f083a06c2a9c

Well, not sure then, but for a while everything was almost fine on master (just one test that normally fails).

What does this PR do?

Pins the docker image version to buy some time to fix the following issues.

Failures

Does your PR introduce any breaking changes? If yes, please list them.

None

Before submitting

PR review

Anyone in the community is welcome to review the PR.

Before you start reviewing, make sure you have read the review guidelines.

Did you have fun?

Make sure you had fun coding 🙃

cc @tchaton @rohitgr7 @carmocca @akihironitta @Borda